The next DataPhilly meetup will feature a medley of machine-learning talks, including an Intro to ML from yours truly. Check out the speakers list and be sure to RSVP. Hope to see you there!

Thursday, February 18, 2016

6:00 PM to 9:00 PM

Speakers:

- Corey Chivers

- Randy Olson

- Austin Rochford

Abstract: Corey will present a brief introduction to machine learning. In his talk he will demystify what is often seen as a dark art. Corey will describe how we “teach” machines to learn patterns from examples by breaking the process into its easy-to-understand component parts. Using examples from fields as diverse as biology, health care, astrophysics, and NBA basketball, Corey will show how data (both big and small) is used to teach machines to predict the future so we can make better decisions.

Bio: Corey Chivers is a Senior Data Scientist at Penn Medicine, where he is building machine learning systems to improve patient outcomes by providing real-time predictive applications that empower clinicians to identify at-risk individuals. When he’s not poring over data, he’s likely to be found cycling around his adoptive city of Philadelphia or blogging about all things probability and data at bayesianbiologist.com.

Randy Olson (University of Pennsylvania Institute for Biomedical Informatics):

Automating data science through tree-based pipeline optimization

Abstract: Over the past decade, data science and machine learning have grown from a mysterious art form to a staple tool across a variety of fields in business, academia, and government. In this talk, I’m going to introduce the concept of tree-based pipeline optimization for automating one of the most tedious parts of machine learning: pipeline design. All of the work presented in this talk is based on the open source Tree-based Pipeline Optimization Tool (TPOT), which is available on GitHub at https://github.com/rhiever/tpot.

Bio: Randy Olson is an artificial intelligence researcher at the University of Pennsylvania Institute for Biomedical Informatics, where he develops state-of-the-art machine learning algorithms to solve biomedical problems. He regularly writes about his latest adventures in data science at RandalOlson.com/blog, and tweets about the latest data science news at http://twitter.com/randal_olson.

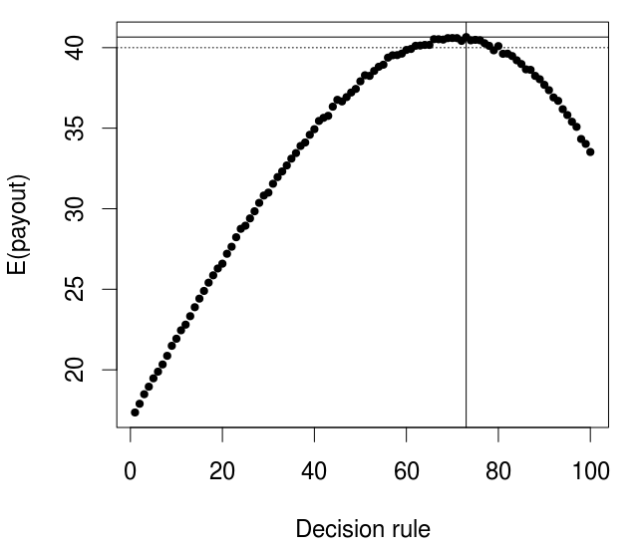

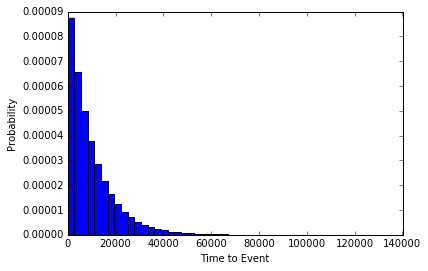

Austin Rochford (Monetate):

Abstract: Bayesian optimization is a technique for finding the extrema of functions that are expensive, difficult, or time-consuming to evaluate. Its applications include tuning the hyperparameters of machine learning models and optimizing the inputs to real-world experiments and processes. This talk will introduce the Gaussian process approach to Bayesian optimization, with sample code in Python.

Bio: Austin Rochford is a Data Scientist at Monetate. He is a former mathematician who is interested in Bayesian nonparametrics, multilevel models, probabilistic programming, and efficient Bayesian computation.
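
If you'd like a taste of the Gaussian process approach before the meetup, here is a minimal sketch in Python. It is not Austin's sample code: the squared-exponential kernel, the fixed length scale, the expected-improvement acquisition rule, and the toy objective are all assumptions chosen to keep the example short, and it only uses NumPy.

```python
import math
import numpy as np


def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between two 1-D arrays of points."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def gp_posterior(x_train, y_train, x_query, jitter=1e-6):
    """Posterior mean and standard deviation of a GP at x_query.

    The prior mean is set to the average of the observed values
    (an assumption made here for simplicity).
    """
    y_mean = y_train.mean()
    k_tt = rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    solved = np.linalg.solve(k_tt, k_tq)        # K^-1 k(x_train, x_query)
    mean = y_mean + solved.T @ (y_train - y_mean)
    var = 1.0 - np.sum(k_tq * solved, axis=0)   # prior variance is 1 for this kernel
    return mean, np.sqrt(np.clip(var, 1e-12, None))


def expected_improvement(mean, std, best):
    """Expected improvement over the best value seen so far (maximization)."""
    z = (mean - best) / std
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    return (mean - best) * cdf + std * pdf


def bayes_opt(objective, n_init=3, n_iter=15, seed=0):
    """Maximize objective on [0, 1], picking each new point by expected improvement."""
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 201)           # candidate points to score
    x = rng.uniform(0.0, 1.0, size=n_init)      # a few random starting evaluations
    y = np.array([objective(v) for v in x])
    for _ in range(n_iter):
        mean, std = gp_posterior(x, y, grid)
        ei = expected_improvement(mean, std, y.max())
        x_next = grid[np.argmax(ei)]            # most promising candidate
        x = np.append(x, x_next)
        y = np.append(y, objective(x_next))
    return x[np.argmax(y)], y.max()


def toy_objective(v):
    """Hypothetical stand-in for an expensive function; its maximum is at 0.7."""
    return -(v - 0.7) ** 2


best_x, best_y = bayes_opt(toy_objective)
print(best_x, best_y)
```

The loop fits a Gaussian process to the evaluations made so far, scores every candidate by how much improvement it is expected to offer, and spends the next (expensive) evaluation on the top-scoring point. In practice you would swap `toy_objective` for something costly, such as a cross-validated model score as a function of a hyperparameter.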
