The theme for this year’s workshop will be “Moving beyond supervised learning in healthcare”. This will be a great forum for those who work on computational solutions to the challenges facing clinical medicine. The submission deadline is Friday Oct 26, 2018. Hope to see you there!


Generative Adversarial Networks are the hotness at NIPS 2016

Although they first hit the scene two years ago, Generative Adversarial Networks (GANs) have become the darlings of this year’s NIPS conference. The term “Generative Adversarial” appears 170 times in the conference program. So far I’ve seen talks demonstrating their utility in everything from generating realistic images to predicting and filling in missing video segments, and generating rooms, maps, and objects of various sorts. They are even being applied to the world of high energy particle physics, pushing the state of the art of inference within the language of quantum field theory.

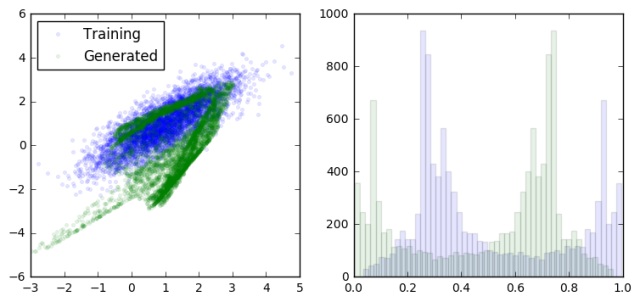

The basic idea is to build two models and pit them against each other (hence the adversarial part). The generative model takes random inputs and tries to generate output data that “look like” real data. The discriminative model takes as input data from both the generative model and the real dataset, and tries to correctly distinguish between them. By iteratively updating each model in turn, we hope to reach an equilibrium where neither the discriminator nor the generator can improve. At this point the generator is doing its best to fool the discriminator, and the discriminator is doing its best not to be fooled. The result (if everything goes well) is a generative model which, given some random inputs, will output data that appear to be plausible samples from your dataset (e.g. cat faces).
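For reference, the original GAN formulation writes this game as a single value function $V(D, G)$ that the discriminator $D$ tries to maximize and the generator $G$ tries to minimize. Here $D(x)$ is the discriminator’s estimated probability that $x$ is real, $G(z)$ is the generator’s output for a random input $z$, $p_{\text{data}}$ is the real-data distribution and $p_z$ is the distribution of the random inputs; this is the standard textbook notation rather than anything specific to the toy example in this post.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z}\left[\log\left(1 - D(G(z))\right)\right]
$$

Because the generator minimizes the same quantity the discriminator maximizes, the generator’s loss is just the negative of the discriminator’s loss.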

As with any concept that I’m trying to wrap my head around, I took a moment to create a toy example of a GAN to try to get a feel for what is going on.

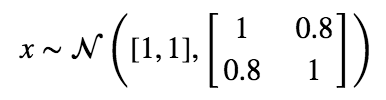

Let’s start with a simple distribution from which to draw our “real” data.

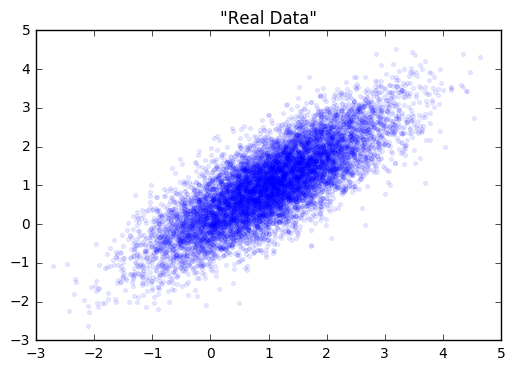
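The post doesn’t say which distribution is pictured, so the sketch below assumes, purely for illustration, a one-dimensional Gaussian with mean 4 and standard deviation 0.5, plus uniform noise as the generator’s random input. It uses NumPy rather than TensorFlow, and `sample_real` and `sample_noise` are hypothetical helper names.

```python
import numpy as np

def sample_real(n, rng, mean=4.0, std=0.5):
    """Draw n "real" samples as an (n, 1) column.

    Assumption: a 1-D Gaussian stands in for the distribution in the figure.
    """
    return rng.normal(mean, std, size=(n, 1))

def sample_noise(n, rng, low=-1.0, high=1.0):
    """Random inputs for the generator (assumed uniform), also shaped (n, 1)."""
    return rng.uniform(low, high, size=(n, 1))

rng = np.random.default_rng(42)
real = sample_real(10_000, rng)
print(real.mean(), real.std())  # both should sit near the assumed 4.0 and 0.5
```

Keeping every batch as an `(n, 1)` column means the same arrays can be fed straight into a fully connected network whose input layer has width 1.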

Next, we’ll create our generator and discriminator networks using TensorFlow. Each will be a three-layer, fully connected network with ReLUs in the hidden layers. The loss function for the generative model is -1 times the loss function of the discriminative model. This is the adversarial part: the generator does better as the discriminator does worse. I’ve put the code for building this toy example here.
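A rough NumPy equivalent of the two networks and their losses might look like the sketch below. It is not the post’s TensorFlow code: the layer widths (16 hidden units), the initialization scale, the assumed Gaussian “real” data, and the helper names `init_mlp`, `mlp`, `discriminator_loss`, and `generator_loss` are all illustrative assumptions. The discriminator’s loss is taken to be binary cross-entropy with real samples labelled 1 and generated samples labelled 0, and the generator’s loss is simply its negation.

```python
import numpy as np

def init_mlp(sizes, rng, scale=0.1):
    """Weights and biases for a fully connected net, e.g. sizes=[1, 16, 16, 1]."""
    return [(rng.normal(0.0, scale, (m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]

def mlp(params, x):
    """Forward pass: ReLU after every hidden layer, linear output layer."""
    h = x
    for i, (W, b) in enumerate(params):
        h = h @ W + b
        if i < len(params) - 1:  # hidden layers only
            h = np.maximum(h, 0.0)
    return h

def sigmoid(t):
    return 1.0 / (1.0 + np.exp(-t))

def discriminator_loss(d_params, real, fake, eps=1e-12):
    """Binary cross-entropy: the discriminator wants D(real) -> 1 and D(fake) -> 0."""
    p_real = sigmoid(mlp(d_params, real))
    p_fake = sigmoid(mlp(d_params, fake))
    return -np.mean(np.log(p_real + eps)) - np.mean(np.log(1.0 - p_fake + eps))

def generator_loss(d_params, real, fake):
    """The adversarial part: the generator's loss is -1 times the discriminator's."""
    return -discriminator_loss(d_params, real, fake)

rng = np.random.default_rng(0)
G = init_mlp([1, 16, 16, 1], rng)  # generator: noise in, sample out
D = init_mlp([1, 16, 16, 1], rng)  # discriminator: sample in, logit out
z = rng.uniform(-1.0, 1.0, size=(64, 1))
real = rng.normal(4.0, 0.5, size=(64, 1))  # assumed "real" data
fake = mlp(G, z)
print(discriminator_loss(D, real, fake), generator_loss(D, real, fake))
```

With `sizes=[1, 16, 16, 1]` each network has three weight layers, two of them followed by a ReLU, which matches the “three-layer, fully connected” description.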

Next, we’ll fit each model in turn. Note in the code that we gave each optimizer its own list of variables to update via gradient descent. This is because we don’t want to update the weights of the discriminator while we’re updating the weights of the generator, and vice versa.
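The idea of separate variable lists can be seen in a deliberately tiny, pure-Python sketch. To keep the gradients short enough to write by hand, it swaps the three-layer networks for a linear generator G(z) = a·z + b and a logistic discriminator D(x) = sigmoid(w·x + c); these stand-ins, the learning rate, and the assumed Gaussian “real” data are illustrative choices, not the post’s setup. Each update function reads both models but returns new values only for its own parameters, which is the same separation that per-optimizer variable lists enforce.

```python
import math
import random

def sigmoid(t):
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def d_step(d, g, real, z, lr):
    """One gradient-descent step on the discriminator's (w, c) only; g is read, never changed."""
    w, c = d
    a, b = g
    gw = gc = 0.0
    for x in real:  # loss term -log D(x) for real samples
        s = sigmoid(w * x + c)
        gw -= (1.0 - s) * x / len(real)
        gc -= (1.0 - s) / len(real)
    for zi in z:  # loss term -log(1 - D(G(z))) for generated samples
        x = a * zi + b
        s = sigmoid(w * x + c)
        gw += s * x / len(z)
        gc += s / len(z)
    return (w - lr * gw, c - lr * gc)

def g_step(d, g, z, lr):
    """One gradient-descent step on the generator's (a, b) only; d is read, never changed.

    The generator's loss is the negative of the discriminator's, so it
    minimizes mean log(1 - D(G(z))), the only term that depends on (a, b).
    """
    w, c = d
    a, b = g
    ga = gb = 0.0
    for zi in z:
        s = sigmoid(w * (a * zi + b) + c)
        ga -= s * w * zi / len(z)
        gb -= s * w / len(z)
    return (a - lr * ga, b - lr * gb)

def train(steps=600, batch=64, lr=0.05, seed=0):
    rng = random.Random(seed)
    d = (0.0, 0.0)  # discriminator parameters (w, c)
    g = (1.0, 0.0)  # generator parameters (a, b)
    for _ in range(steps):
        real = [rng.gauss(4.0, 0.5) for _ in range(batch)]  # assumed "real" data
        z = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        d = d_step(d, g, real, z, lr)  # generator frozen
        g = g_step(d, g, z, lr)        # discriminator frozen
    return d, g
```

Calling `d, g = train()` runs the alternating updates. Returning fresh tuples instead of mutating shared state makes it impossible for one step to touch the other model’s parameters by accident.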

loss at step 0: discriminative: 11.650652, generative: -9.347455

loss at step 200: discriminative: 8.815780, generative: -9.117246

loss at step 400: discriminative: 8.826855, generative: -9.462300

loss at step 600: discriminative: 8.893397, generative: -9.835464

Simudidactic

au·to·di·dact n.

A self-taught person.

From Greek autodidaktos, self-taught : auto-, auto- + didaktos, taught;

+

sim·u·late v.

To create a representation or model of (a physical system or particular situation, for example).

From Latin simulāre, simulāt-, from similis, like;

=

(If you can get past the mixing of Latin and Greek roots)

sim·u·di·dac·tic adj.

Of or relating to learning by creating a representation or model of a physical system or particular situation; in particular, using in silico computation to understand complex systems and phenomena.

———————————————————————

This concept has been floating around in my head for a little while. I’ve written before on how I believe that simulation can be used to improve one’s understanding of just about anything, but have never had a nice shorthand for this process.

Simudidactic inquiry is the process of understanding aspects of the world by abstracting them into a computational model, then conducting experiments in this model world by changing the underlying properties and parameters. In this way, one can ask questions like:

- What type of observations might we make if x were true?

- If my model of the process is accurate, can I recapture the underlying parameters given the type of observations I can make in the real world? How often will I be wrong?

- Will I be able to distinguish between competing models given the observations I can make in the real world?
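As a small, hypothetical illustration of the second question, the sketch below treats the “process” as a run of independent yes/no observations with an unknown probability `p_true`. It simulates the data the model would produce (the first question), estimates the parameter back from each simulated dataset, and counts how often an approximate 95% interval misses the true value. The binomial model, the Wald interval, and the specific numbers are assumptions chosen for brevity.

```python
import math
import random

def simulate_observations(p_true, n, rng):
    """Hypothetical model: n independent yes/no observations with success probability p_true."""
    return [1 if rng.random() < p_true else 0 for _ in range(n)]

def estimate(obs):
    """Maximum-likelihood estimate of p with an approximate 95% (Wald) interval."""
    n = len(obs)
    p_hat = sum(obs) / n
    half = 1.96 * math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat, p_hat - half, p_hat + half

def miss_rate(p_true=0.3, n=50, trials=2000, seed=1):
    """Fraction of simulated experiments whose interval fails to cover p_true."""
    rng = random.Random(seed)
    misses = 0
    for _ in range(trials):
        _, lo, hi = estimate(simulate_observations(p_true, n, rng))
        if not (lo <= p_true <= hi):
            misses += 1
    return misses / trials

print(miss_rate())
```

With these settings the miss rate should land somewhere near 5%. The Wald interval is known to undercover for small samples or probabilities near 0 or 1, and re-running `miss_rate` with, say, `p_true=0.02` is exactly the kind of experiment that surfaces such problems before real data are collected.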

In addition to being able to ask these types of questions, the simudidact solidifies their understanding of the model by actually building it.

So go on, get simudidactic and learn via simulation!