In today’s XKCD, a pair of (presumably) physicists are told by their neutrino detector that the sun has gone nova. Problem is, the machine rolls two dice and if they both come up six it lies, otherwise it tells the truth.

The Frequentist reasons that the probability of obtaining this result if the sun had not, in fact, gone nova is 1/36 (≈0.028, p<0.05) and concludes that the sun is exploding. Goodbye cruel world.

The Bayesian sees things a little differently, and bets the Frequentist $50 that he is wrong.

Let’s set aside the obvious supremacy of the Bayesian’s position due to the fact that were he to turn out to be wrong, come the fast approaching sun-up-to-end-all-sun-ups, he would have very little use for that fifty bucks anyway.

What prior probability would we have to ascribe to the sun succumbing to cataclysmic nuclear explosion on any given night in order to take the Bayesian’s bet?

Not surprisingly, we’ll need to use Bayes!

Here M is the event that the machine reports a solar explosion, and S is the event that the sun has actually exploded.

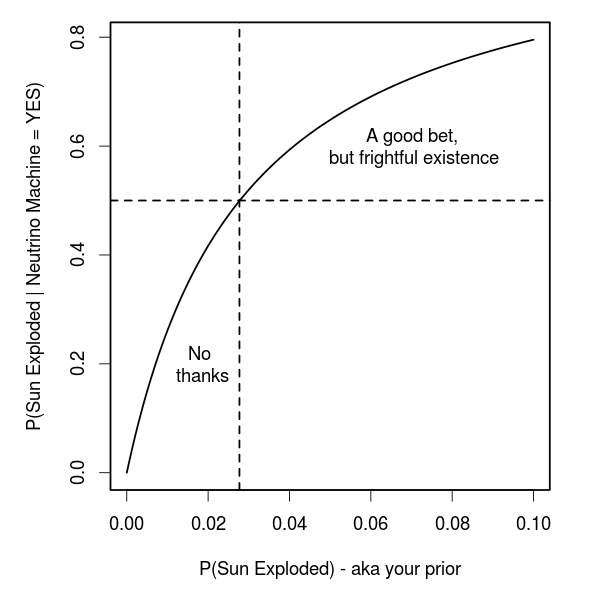
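For reference, here is a sketch of the formula in this notation. It assumes the standard two-hypothesis form of Bayes' theorem and the likelihoods used in the R code further down, $P(M \mid S) = 35/36$ and $P(M \mid \neg S) = 1/36$:

$$
P(S \mid M) = \frac{P(M \mid S)\,P(S)}{P(M \mid S)\,P(S) + P(M \mid \neg S)\,\bigl(1 - P(S)\bigr)}
$$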

Assuming we are risk neutral, and we take any bet with an expected value greater than the cost, we will take the Bayesian’s bet if P(S|M)>0.5. At this cutoff, the expected value is 0.5*0+0.5*100=50 and hence the bet is worth at least the cost of entry.

The rub is that this value depends on our prior, that is, the prior probability that we ascribe to global annihilation by complete solar nuclear fusion. We can set P(S|M)=0.5 and solve for P(S) to get the threshold value for a prior that would make the bet a good one (i.e., not a Dutch book). This turns out to be:

P(S) = P(M|not S) / (P(M|S) + P(M|not S)) = (1/36) / (35/36 + 1/36) = 1/36

Which is ~0.0278, the Frequentist’s p-value!

So, assuming 1:1 payout odds on the bet, we should only take it if we already thought that there was at least a 1-in-36 (roughly 2.8%) chance that the sun would explode, before even asking the neutrino detector. From this, we can also see what odds we would be willing to take on the bet for any level of prior belief about the end of the world.

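```r
# Posterior probability that the sun exploded, given that the machine says YES.
# P_S is the prior probability of a solar explosion.
sun_explode <- function(P_S) {
  P_MgS  <- 35/36    # P(M | S): the machine tells the truth
  P_MgNS <- 1/36     # P(M | not S): the machine lies (double six)
  P_NS   <- 1 - P_S  # P(not S)
  P_SgM  <- (P_MgS * P_S) / (P_MgS * P_S + P_MgNS * P_NS)
  return(P_SgM)
}

par(cex = 1.3, lwd = 2, mar = c(5, 5, 1, 2))
curve(sun_explode(x),
      xlim = c(0, 0.1),
      ylab = 'P(Sun Exploded | Neutrino Machine = YES)',
      xlab = 'P(Sun Exploded) - aka your prior')
text(0.018, 0.2, 'No\n thanks')
text(0.07, 0.6, 'A good bet,\n but frightful existence')
abline(h = 0.5, lty = 2)   # break-even posterior for a 1:1 bet
abline(v = 1/36, lty = 2)  # threshold prior, about 0.0278
```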
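The remark about odds can be made concrete. What follows is a minimal sketch, not part of the original analysis: `posterior_nova` and `break_even_payout` are hypothetical helper names, and the bettor is assumed to be risk neutral and betting that the sun has exploded. The break-even net payout per dollar staked is (1 - p) / p, where p is the posterior, and `uniroot` recovers the 1:1 threshold prior numerically.

```r
# Hypothetical helper: posterior P(S | M) for a given prior P(S), using the
# likelihoods from the post (truth with prob 35/36, lie with prob 1/36).
posterior_nova <- function(prior) {
  p_yes_if_nova    <- 35 / 36
  p_yes_if_no_nova <- 1 / 36
  (p_yes_if_nova * prior) /
    (p_yes_if_nova * prior + p_yes_if_no_nova * (1 - prior))
}

# Hypothetical helper: net winnings per dollar staked at which a bet on
# "the sun has exploded" breaks even, i.e. (1 - p) / p for posterior p.
break_even_payout <- function(prior) {
  p <- posterior_nova(prior)
  (1 - p) / p
}

# Numerically recover the prior at which a 1:1 bet breaks even.
threshold <- uniroot(function(p) posterior_nova(p) - 0.5,
                     interval = c(1e-9, 0.5), tol = 1e-12)$root

priors <- c(1e-6, 1e-3, 1 / 36, 0.1)  # illustrative priors only
print(data.frame(prior = priors,
                 posterior = posterior_nova(priors),
                 break_even_payout = break_even_payout(priors)))
print(threshold)
```

With a one-in-a-million prior, for example, the break-even payout is (1 - 1e-6) / (35e-6), or roughly 28,571 to 1, so the Bayesian's even-money offer is nowhere near tempting.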

As an astronomer, my prior says that it is not possible for the sun to go nova during my lifetime. I suppose I ought to make it epsilon to keep honest, but my epsilon would be very, very small.

There are very few things that I would place a 0 prior on. If you do, then no amount of evidence will change your position. Like you, I’d go with a very small epsilon on this one though.

This is a fun little exercise, and like most exercises, it’s oversimplified. Taking a more real-world approach, I think there is an important probability that is not being accounted for: the probability that the machine’s algorithm is correct.

Regardless of the method for determining the machine’s intent to lie or not lie, the conclusion imparted by the machine has a probability of being correct or incorrect, and that is a much meaner beast to estimate. In reality, either physicist would assume the sun was not going nova, and the problem would become:

Is the machine (a) lying, (b) inaccurate, or (c) both?

Thanks for the post, a nice example.

Okay, but… You can be a frequentist but still use Bayes Theorem. Bayesians treat unknown fixed variables as random variables pulled from a distribution of one’s beliefs, whereas frequentists treat them as, well, fixed. There are benefits on both sides. But this example has little to do with that. It has to do with using Bayes Theorem vs. being silly!

Just because Bayes Theorem is called Bayes Theorem doesn’t mean frequentists are stupid and can’t use it when it’s clearly the right thing to do! Bayesian Statistics ≠ Bayes Theorem. Frequentism includes Bayes Theorem.

I agree, Hillary! I was just having some fun with Randall’s caricatures. He has written a response to the uproar from statisticians of both camps. http://andrewgelman.com/2012/11/16808/#comment-109366

Thanks for the note.

Priors can come from physical knowledge, as bayesrules says, or they can be based upon some frequentist estimate, or different algorithm. The key insight of Bayesian inference is the commitment to updating by data. Start with a “bad prior”, take enough data, and the prior rapidly becomes irrelevant. No doubt there are some likelihood models where this does not work well, where the prior dominates, but inference depends upon that likelihood model and if it’s lousy, you’re stuck with the prior.
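The claim that a poor prior is eventually overwhelmed by data can be illustrated with a small simulation. This is only a sketch under assumptions that are not in the comment above: a coin-flip (Bernoulli) likelihood with a true success rate of 0.3, and two hypothetical observers with conjugate Beta priors, one optimistic (Beta(20, 2)) and one pessimistic (Beta(2, 20)).

```r
set.seed(1)
true_rate <- 0.3  # assumed for illustration only
flips <- rbinom(5000, size = 1, prob = true_rate)

# Posterior mean under a Beta(a, b) prior after the first n observations
# (conjugate Beta-Binomial update).
posterior_mean <- function(a, b, n) {
  successes <- sum(flips[seq_len(n)])
  (a + successes) / (a + b + n)
}

sample_sizes <- c(0, 10, 100, 1000, 5000)
optimist  <- sapply(sample_sizes, function(n) posterior_mean(20, 2, n))
pessimist <- sapply(sample_sizes, function(n) posterior_mean(2, 20, n))

print(data.frame(n = sample_sizes, optimist, pessimist,
                 gap = abs(optimist - pessimist)))
```

With these particular priors the gap between the two posterior means is 18 / (22 + n) whatever the data turn out to be, so it shrinks from about 0.82 with no data to under 0.004 after 5000 flips.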

Yep. I made a visualization of this process based on two observers with different priors converging as they observe more data.

That’s awesome, Corey! Thanks for pointing it out.

The problem here is that the p-value for this problem is not 1/36. Note that we have the following two hypotheses:

H0: The Sun didn’t explode,

H1: The Sun exploded.

Then,

p-value = P(“the machine returns yes”, when the Sun didn’t explode).

Now, note that the event

“the machine returns yes”

is equivalent to

“the neutrino detector measures the Sun exploding AND tells the true result” OR “the neutrino detector does not measure the Sun exploding AND lies to us”.

Assuming that the dice throwing is independent of the neutrino detector measurement, we can compute the p-value. First define:

p0 = P(“the neutrino detector measures the Sun exploding”, when the Sun didn’t explode),

then the p-value is

p-value = p0*35/36 + (1-p0)*1/36

=> p-value = (1/36)*(35*p0 + 1 - p0)

=> p-value = (1/36)*(1+34*p0).

If p0 = 0, then we are assuming that the detector will never measure “the Sun just exploded” when it has not. The value of p0 is obviously incomputable; therefore, a classical statistician who knows how to compute a p-value would never say that the Sun just exploded. By the way, the cartoon is funny.
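The dependence of this p-value on p0 can be tabulated directly. A minimal sketch, with `p_value` as a hypothetical helper name and the grid of p0 values chosen only for illustration:

```r
# p-value = P(machine says yes | the Sun did not explode), as a function of
# p0 = P(detector measures an explosion | the Sun did not explode).
# Assumes the dice roll is independent of the detector measurement.
p_value <- function(p0) {
  p0 * 35 / 36 + (1 - p0) * 1 / 36
}

p0_grid <- c(0, 0.01, 0.1, 0.5, 1)  # illustrative values only
print(data.frame(p0 = p0_grid,
                 p_value = p_value(p0_grid),
                 simplified = (1 + 34 * p0_grid) / 36))
```

Only at p0 = 0 does this reduce to the 1/36 used in the cartoon. The p-value rises linearly to 35/36 at p0 = 1, and it stays below 0.05 only while p0 < 0.8/34, about 0.0235.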

Moreover, this test is inadmissible since it is based on an ancillary statistic.

What we have to keep in mind is that classical modeling is so vast* and full of possibilities that many kinds of bizarre conclusions can arise when we are not concerned about optimality properties. We could restrict ourselves to tests based on the likelihood-ratio statistic or another optimal procedure.

Notice that Bayesians use the likelihood function and, in addition, represent prior information by probability distributions, so the set of possible ways to build a test is restricted. Classical statisticians, on the other hand, also have the option of using the likelihood function (but it is not mandatory), and they implicitly represent prior information by possibility distributions, so the set of possible ways to build a test is considerably larger than in the Bayesian procedure.

*We can model by using likelihoods, pseudo-likelihoods, matching moments, estimating equations and so forth.