The excellent BBC podcast More or Less does a great job of communicating and demystifying statistics in the news for a general audience. While listening to the most recent episode (Is Salt Bad for You? 19 Aug 2011), I was pleased to hear the host offer a clear, albeit incomplete, explanation of p-values, as reported in scientific studies like the ones being discussed in the episode. I was disappointed, however, to hear him go on to put forward an all too common fallacious extension of their interpretation. I count the show’s host, Tim Harford, among the best when it comes to statistical interpretation, and really feel that his work has improved public understanding, but it would appear that even the best of us can fall victim to this little trap.

The trap:

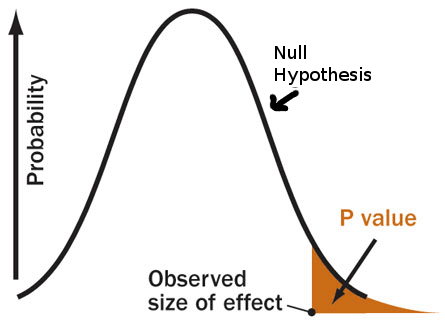

When conducting Frequentist null hypothesis significance testing, the p-value represents the probability that we would observe a result as extreme as, or more extreme than, our result (the “or more extreme” part was left out of the loose definition in the podcast) IF the null hypothesis were true. So, obtaining a very small p-value implies that our result is very unlikely under the null hypothesis. From this, our logic extends to the decision statement:

“If the data is unlikely under the null hypothesis, then either we observed a low probability event, or it must be that the null hypothesis is not true.”

It is important to note that only one of these options can be correct. The p-value tells us something about the likelihood of the data, in a world where the null hypothesis is true. If we choose to believe the first option, the p-value has direct meaning as per the definition above. However, if we choose to believe the second option (which is traditionally done when p<0.05), we now believe in a world where the null hypothesis is not true. The p-value is never a statement about the probability of hypotheses, but rather is a statement about data under hypothetical assumptions. Since the p-value is a statement about data when the null is true, it cannot be a statement about the data when the null is not true.

How does this pertain to what was said in the episode? The host stated that:

“…you could be 93% confident that the results didn’t happen by chance, and still not reach statistical significance.”

He was referring to the case where you have observed a p-value of 0.07. The implication is that you would have 1-p=0.93 probability that the null is not true (i.e. that your observations are not the result of chance alone). From the discussion above, we can begin to see why this cannot be the case. The p-value is a statement about your results only when the null hypothesis is true, and therefore cannot be a statement about the probability that it is false!

An example:

With our complete definition of the p-value in mind, let’s look at an example. Consider a hypothetical study similar to those analyzed by the Cochrane group, in which a thousand or so individuals participated. In this hypothetical study, you observe no mean difference in mortality between the high salt and low salt groups. Such a result would lead to a one-sided p-value of 0.5, or 50%, meaning that if there is no real effect, there is a 50% chance that you would observe a result greater than zero, however slightly. Using the incorrect logic, you would say:

“I am 50% confident that the results are not due to chance.”

Or, in other words, that there is a 50% probability that there is some adverse effect of salt. This may seem reasonable at first blush. However, consider now another hypothetical study in which you have recruited just about every adult in the population (maybe you’re giving away iPads or something), and again you observe zero mean difference in mortality between groups. You would once again have a p-value of 0.5, and might again erroneously state that you are 50% confident that there is an effect. After some thought, however, you would conclude that the second study provided you with more confidence about whether or not there is any effect than did the first, by virtue of having measured so many people, and yet your erroneous interpretation of the p-value tells you that your confidence is the same.
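To make the comparison concrete, here is a minimal sketch that assumes a one-sided z-test on the difference between two group means. The function names, the standard deviation of 1.0 and the two sample sizes are invented for illustration and do not come from the salt studies.

```python
from math import sqrt
from statistics import NormalDist


def standard_error(sd, n_per_group):
    """Standard error of the difference between two group means (equal n, equal sd)."""
    return sd * sqrt(2.0 / n_per_group)


def one_sided_p(observed_diff, sd, n_per_group):
    """P(difference >= observed_diff | no true difference), z-test approximation."""
    z = observed_diff / standard_error(sd, n_per_group)
    return 1.0 - NormalDist().cdf(z)


# Hypothetical studies: same observed difference (zero), very different sizes.
p_small = one_sided_p(0.0, sd=1.0, n_per_group=500)
p_huge = one_sided_p(0.0, sd=1.0, n_per_group=20_000_000)

se_small = standard_error(1.0, 500)
se_huge = standard_error(1.0, 20_000_000)

print("p-values:", p_small, p_huge)
print("standard errors:", se_small, se_huge)
```

Both p-values come out the same, while the standard error of the huge study is far smaller. The extra certainty that the second study provides shows up in the precision of the estimate, not in the p-value.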

The solution:

I have heard this fallacious interpretation of p-values everywhere from my undergraduate Biometry students to highly reputable peer-reviewed research publications. Why is this error so prevalent? It seems to me that the issue lies in the fact that what we really want to be able to say is not a statement about our results under the assumption of no effect (the p-value), but rather a statement about hypotheses given our results (which we do not get directly through the p-value).

One solution to this problem lies in a statistical concept known as power. Power is the calculation of how likely it is that you would observe a p-value below some critical value (usually the canonical 0.05), for both a given sample size and the size of the effect that you wish to detect. The smaller the size of the real effect that you wish to measure, the higher the sample size required if you want to have a high probability of finding statistical significance.
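As a rough illustration of how power depends on sample size and effect size, the sketch below uses a normal approximation for a one-sided two-sample test. The function name `power_one_sided`, the effect size of 0.1 and the standard deviation of 1.0 are assumptions chosen for the example.

```python
from math import sqrt
from statistics import NormalDist


def power_one_sided(effect, sd, n_per_group, alpha=0.05):
    """Approximate probability of obtaining p < alpha when the true
    difference between group means is `effect` (one-sided z-test)."""
    std_normal = NormalDist()
    se = sd * sqrt(2.0 / n_per_group)          # standard error of the difference
    z_crit = std_normal.inv_cdf(1.0 - alpha)   # cut-off for significance
    # Under the alternative, the z statistic is centred on effect / se.
    return 1.0 - std_normal.cdf(z_crit - effect / se)


# Hypothetical small effect, measured with increasingly large studies.
for n in (50, 500, 5000):
    print(n, round(power_one_sided(effect=0.1, sd=1.0, n_per_group=n), 3))
```

With a true effect of zero the function returns `alpha`, which is just the false positive rate. For a fixed small effect, the power climbs towards 1 as the sample size grows.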

This is why it is important to distinguish, as was done in the episode, between statistical significance and biological, or practical, significance. A study may have high power, due to a large sample size, and this can lead to statistically significant results, even for very small biological effects. Alternatively, the study may have low power, in which case it may not find statistically significant results, even if there is indeed some real biologically relevant effect present.

Another solution is to switch to a Bayesian perspective. Bayesian methods allow us to make direct statements about what we are really interested in – namely, the probability that there is some effect (general hypotheses), as well as the probability of the strength of that effect (specific hypotheses).
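A deliberately simplified sketch of the idea follows. It assumes just two competing hypotheses (“no effect” and “some effect”) and treats the data as the event “the study reached p < 0.05”. The prior probabilities and the power of 0.8 are made-up numbers, and the function name is hypothetical.

```python
def posterior_prob_effect(prior, p_data_given_effect, p_data_given_null):
    """Bayes' rule for two hypotheses:
    P(effect | D) = P(D | effect) P(effect) / P(D)."""
    evidence = (p_data_given_effect * prior
                + p_data_given_null * (1.0 - prior))
    return p_data_given_effect * prior / evidence


# D = "the study was significant at 0.05".
# P(D | null) is the significance level; P(D | effect) is the power.
sceptical = posterior_prob_effect(prior=0.1, p_data_given_effect=0.8,
                                  p_data_given_null=0.05)
agnostic = posterior_prob_effect(prior=0.5, p_data_given_effect=0.8,
                                 p_data_given_null=0.05)

print(round(sceptical, 3))
print(round(agnostic, 3))
```

The same significant result yields different probabilities that the effect is real depending on the prior and the power, and neither number is 1 minus the p-value.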

In short:

What we really want is the probability of hypotheses given our data (written as P(H | D)), which we can obtain by applying Bayes’ rule.

What we get from a p-value is the probability of observing something as extreme as, or more extreme than, our data, under the null hypothesis (written as P(x ≥ D | H0)). Isn’t that awkward? No wonder it is so commonly misrepresented.

So, my word of caution is this: We have to remember that the p-value is only a statement of the likelihood of making an observation as extreme as, or more extreme than, your observation, if there is, in fact, no real effect present. We must be careful not to perform the tempting, but erroneous, logical inversion of using it to represent the probability that a hypothesis is, or is not, true. An easy little catch phrase to remember this is:

“A lack of evidence for something is not a stack of evidence against it.”

There is a better catchphrase:

“Absence of evidence is not evidence of absence.”

Thanks, Alex. You’re right, that is a better catch phrase. It has a symmetry that makes it more memorable too 🙂

It is a cute catch-phrase, but it is wrong, as is your catch-phrase, taken literally. I am a hardcore Bayesian, and I hate the prosecutor’s (p-value) fallacy as much as the next person, but it is a simple consequence of Bayes’ theorem that absence of evidence most certainly is evidence of absence. What is the evidence that dodos are extinct? Is it not the absence of evidence that they exist? I’m sure John D Cook had a nice blog post about this. This is the reason that frequentist statistics, though fundamentally flawed, is not completely stupid.

Consider these two situations:

1) you have looked repeatedly in a great many places, and found no dodos.

2) you have looked only once, in your back yard, and found no dodos.

In the first situation, you have a great deal of evidence to suggest that dodos do not exist, given the number of observed absences you have made. In the 2nd case, you have very little evidence of absence, but this does not lead you to strongly believe in a presence of dodos, generally.

I don’t understand your premise. It seems that you are conflating the absence of an effect with absence of evidence of an effect. Perhaps you should rethink.

I also never suggested that frequentist statistics was completely stupid. I intended simply to highlight the dangers of misinterpreting the meaning of the p-value.

http://www.johndcook.com/blog/2011/02/22/absence-of-evidence/

http://lesswrong.com/lw/ih/absence_of_evidence_is_evidence_of_absence/

Thank you for this, your writing is very lucid (though I still have to go back and think more about power and the Bayesian approach). I teach Economics and Mathematics to A-level students and, unfortunately, having had little education in Statistics, and with the Stats I am now required to teach being so awful, I have forgotten some of the important ideas you discuss. I keep promising to go back and think them through again – but that’s the thing with Statistics, you do keep having to think the ideas through step by step. By comparison calculus is easy.

I was very struck by a TED talk by Arthur Benjamin, http://www.ted.com/talks/lang/eng/arthur_benjamin_s_formula_for_changing_math_education.html which you may not have seen, but he captures a great failing with pre-university education, certainly in the UK and US; it’s only 3 minutes long.

Was listening to a great podcast the other night about Bayesian stats and their origin in gambling. Very interesting xx

I would like to know what p-cast that was. It sounds interesting – I’m always looking for an interesting way to get students engaged in Bayesian reasoning.

What do you mean by “extreme”, as in, ” the p-value represents the probability that we would observe a result as extreme or more”? I have difficulty wrapping this around my head while trying to understand the rest of the content. I keep thinking that the data needs some kind of ordering and “extreme” is a kind of comparator such as “larger than”.

That’s a great question, and I think that this is something that causes a lot of people problems when they try to make sense of this. To think about what ‘more extreme’ means in this setting, we have to start by thinking about what we are measuring. In the salt studies, one of the metrics that was being looked at was the difference in blood pressure between the high and low salt intake groups. So in this case, a mean difference of zero would be our null hypothesis (no difference between groups). Any observed mean difference greater than (or less than) zero would be ‘more extreme’ than zero. If we observed a mean difference of 5 mmHg between the high and low salt groups (i.e. the high salt group’s blood pressure was, on average, 5 mmHg higher), we would construct our p-value based on the probability of observing a difference of 5 or greater (i.e. ‘more extreme’), if the true (population) difference were zero (the null hypothesis).

The reason that we cannot use the probability of the exact observation (in the above example, a difference of 5mmHg) is due to the peculiarities of continuous variables. In the continuous setting, since there are infinitely many possible outcomes (think 5.999998, and 5.999999, etc.), the probability of any one outcome is actually zero. You may have to pull out your old first year calculus text book to remind yourself why this is so. Instead, we calculate the probability of observing a result at least as far from the null hypothesis as our observations (see the yellow area in the figure in the main post). The use of ‘as extreme’ is simply a handy shorthand for the fact that any real effect may be positive or negative. We could equivalently say that the p-value is the probability of observing an absolute difference from the null hypothesis as great, or greater than the data we have observed.
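Here is a small sketch of that tail-area calculation for the 5 mmHg example, assuming the difference in means is approximately normal under the null. The standard error of 2.5 mmHg is a made-up value for illustration, and `tail_p_values` is a hypothetical helper name.

```python
from statistics import NormalDist


def tail_p_values(observed_diff, standard_error):
    """Return (one_sided, two_sided) p-values under a null of zero difference."""
    std_normal = NormalDist()
    z = observed_diff / standard_error
    one_sided = 1.0 - std_normal.cdf(z)                 # P(diff >= observed)
    two_sided = 2.0 * (1.0 - std_normal.cdf(abs(z)))    # P(|diff| >= |observed|)
    return one_sided, two_sided


# Observed difference of 5 mmHg with an assumed standard error of 2.5 mmHg.
one_sided, two_sided = tail_p_values(5.0, 2.5)
print(round(one_sided, 4), round(two_sided, 4))

# An observed difference of exactly zero is the least extreme result possible.
zero_one_sided, zero_two_sided = tail_p_values(0.0, 2.5)
print(zero_one_sided, zero_two_sided)
```

The two-sided value counts both tails, so it is twice the one-sided value here. An observed difference of exactly zero gives the largest two-sided p-value possible.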

This is a great explanation (infinitely many possible outcomes), that clicked the whole thing in for me. In general – a good page. And I also often catch myself “falling into a trap” of assigning the p values to the hypothesis rather than to the data – and I am supposedly a bioinformatics specialist, publishing papers on applying Bayesian method to analyzing genetic association data… 😉 thank you!

Relatively few students in UK schools learn much about significance testing. Of those who do, the contexts they work in are binomial and Poisson distributions (I’ve no idea why, I think normal and t are much easier to understand).

Anyway, I usually have a little fun with the binomial testing. I arrange for a student (not a mathematician) to interrupt the start of my lesson by claiming to be a mind reader. They explain that they can do a simple test. Another student writes heads or tails on a piece of paper and the mind reader will attempt to guess what has been written down. The mind reader then disappears before we can try the test.

The question to the class then is how should we carry out this test, and what we come down to is producing a critical region of eight (if memory serves) or more guesses in ten correct. If we assume that the student cannot read minds and the probability of a correct guess is 50%, then the probability of getting eight or more is about 5% (56/1024, or roughly 5.5%).
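The tail probability for this classroom test can be checked directly from the binomial formula. The sketch below assumes ten independent guesses, each correct with probability 0.5; the function name `prob_at_least` is just for illustration.

```python
from math import comb


def prob_at_least(k, n=10, p=0.5):
    """P(X >= k) for X ~ Binomial(n, p): sum the probabilities of k, k+1, ..., n."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k, n + 1))


eight_or_more = prob_at_least(8)   # 56 / 1024
nine_or_more = prob_at_least(9)    # 11 / 1024

print(eight_or_more, nine_or_more)
```

Under these assumptions “eight or more” comes out at 56/1024, a little above 5%, while “nine or more” is 11/1024, about 1%. A critical region held strictly below 5% would therefore start at nine correct guesses.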

The eight or more thing still sits a little uneasily with me. The probability of getting exactly eight guesses correct is a smaller number and hence an easier hurdle to jump. But by adding the possibilities of getting 9 or 10 correct which are also evidence of mind reading we make the hurdle higher. Mind, I suppose the probability of getting any specific number of correct guesses will be smaller than five percent.

“Since the p-value is a statement about data when the null is true, it cannot be a statement about the data when the null is not true.” — Excellent! Everyone involved in scientific analysis or communication must be able to understand this bit.

Thanks for your explanation. I’m an investor in a small biotech company, and they keep reporting p-values for their ongoing clinical trials of their development-stage unapproved cancer drug. If the company does not state whether their reported p-values are 1-sided or 2-sided is there a way to infer which one they’re talking about? (Is one standard?)

How is the p-value of 50% calculated when the mean difference between the groups is 0? It makes sense intuitively, but it seems to imply that the maximum value of a p-value is 50%, which from what I can find on Google seems not to be the case. Does it relate to the two-tailed distribution?

Yep! For a two-sided test you would get 100%.